The Databricks lakehouse architecture is genuinely powerful: it unifies data engineering, analytics, and machine learning in a single environment built on Apache Spark, Delta Lake, and Unity Catalog. But that power only shows up when the implementation is done right, the pipelines are built for how your data actually moves, and the governance is in place before the compliance team asks for it.

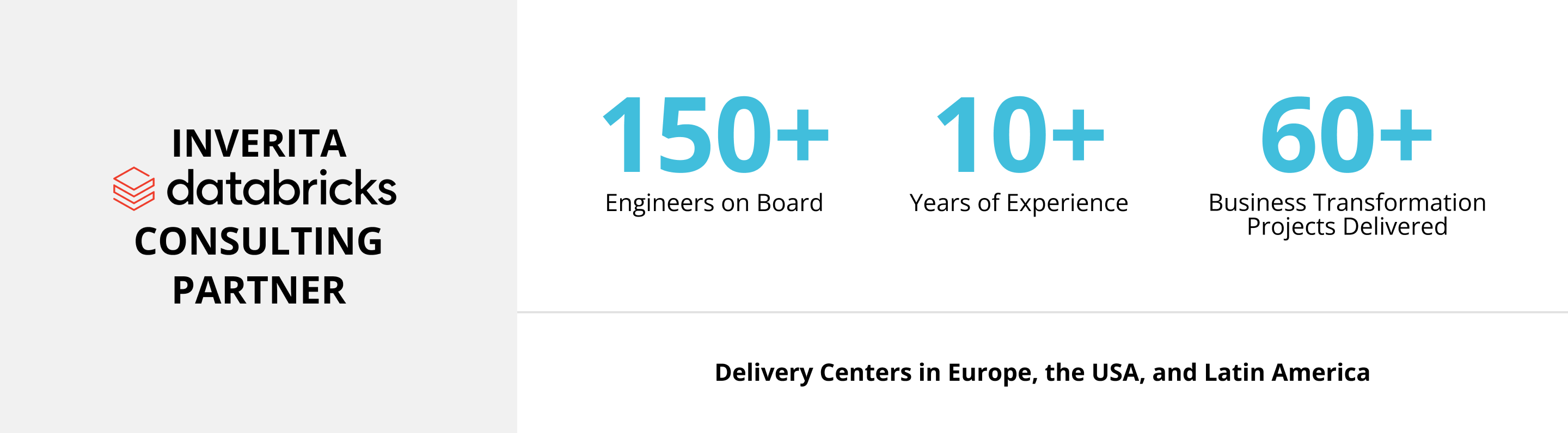

inVerita is a Databricks consulting partner with dozens of successful projects delivered. That experience means that when a client asks us whether Databricks is the right choice, we give an honest answer rather than the one that benefits our practice.

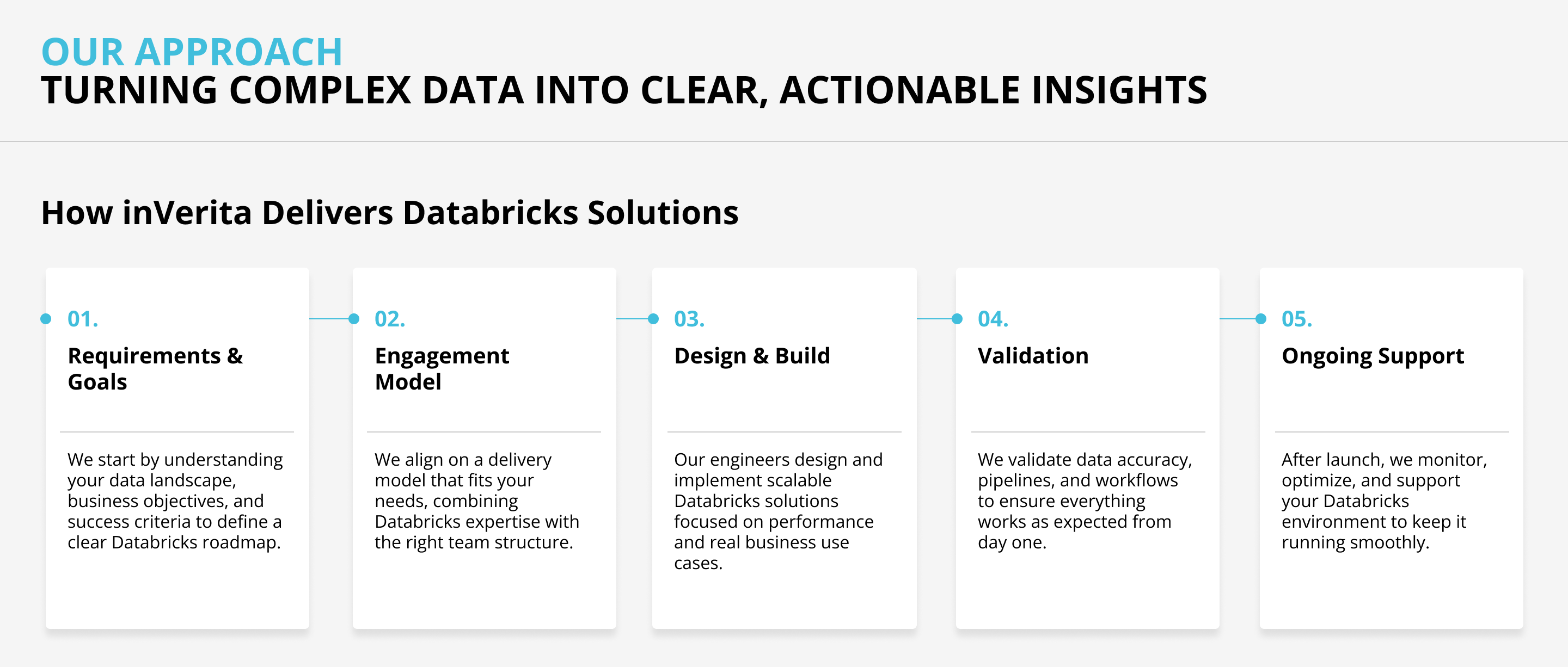

Our Databricks development and consulting services span the full platform lifecycle: architecture, migration, Databricks data engineering services, MLOps, Unity Catalog governance, cost optimization, and long-term Databricks managed services.

We've built Databricks environments from scratch, migrated organizations onto the platform from Hadoop, Snowflake, and Teradata, and optimized environments where costs had grown well beyond what the business expected.