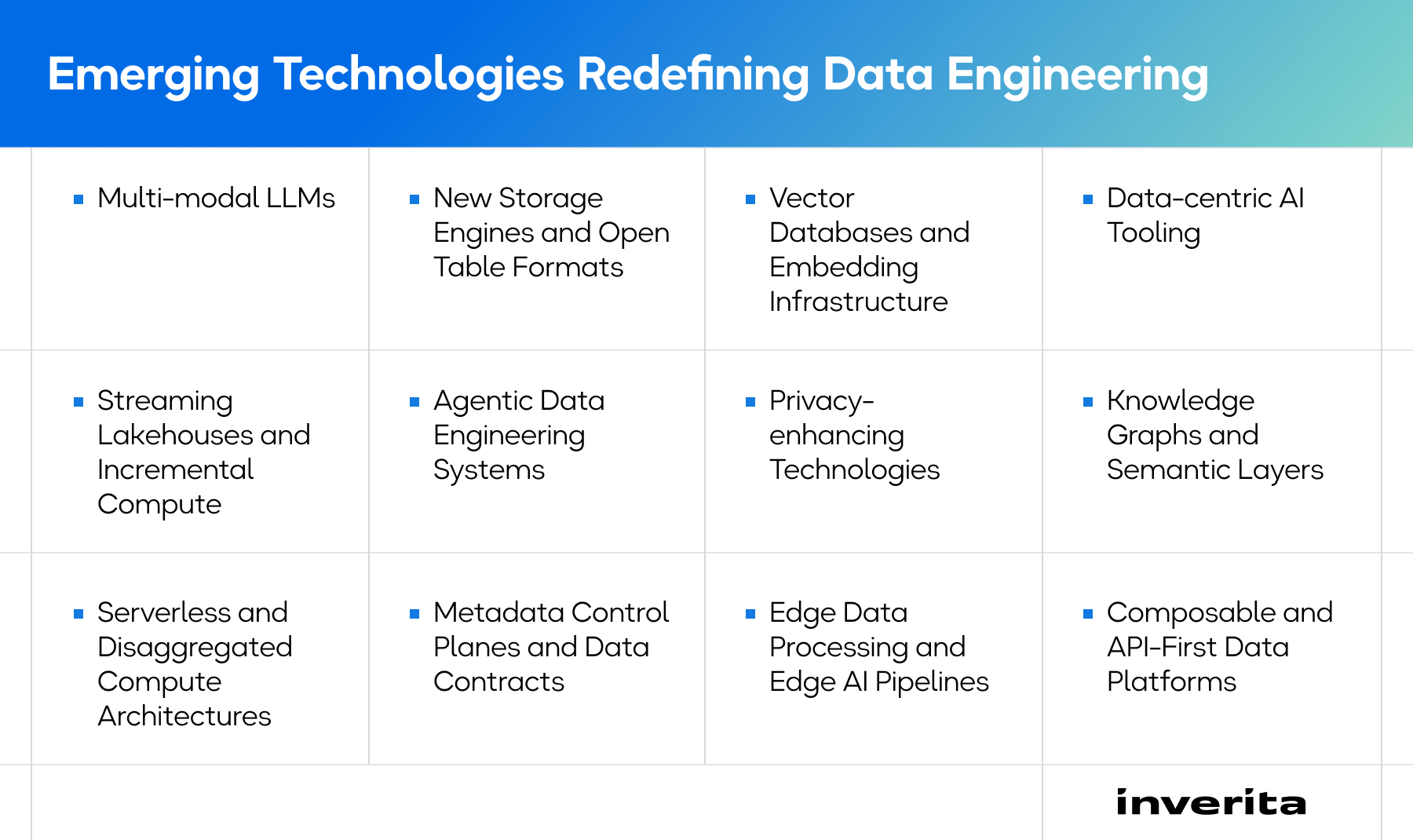

Multi-modal LLMs

Models that process text, images, audio, video, and structured data simultaneously, redefining how pipelines prepare and serve training and inference data.

New Storage Engines and Open Table Formats

Next-generation storage layers (Iceberg-native engines, disaggregated storage-compute architectures, vector-aware storage) optimized for AI, real-time workloads, and lakehouse interoperability.

Vector Databases and Embedding Infrastructure

Vector databases store embeddings – numerical representations of text, images, or other data – powering semantic search, recommendation systems, retrieval-augmented generation (RAG), and AI-enabled solutions.
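At its core, semantic search ranks stored embeddings by how close they are to a query embedding. The sketch below is a minimal illustration that uses cosine similarity and a brute-force scan. The document IDs and three-dimensional vectors are hypothetical. Production vector databases typically replace the full scan with approximate nearest-neighbor indexes (such as HNSW) so they can scale to millions of vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Brute-force nearest-neighbor search: score every stored embedding, keep the best k."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical 3-dimensional embeddings; real embedding models emit hundreds of dimensions.
index = {
    "doc_refund_policy": [0.9, 0.1, 0.0],
    "doc_shipping_times": [0.1, 0.9, 0.1],
    "doc_return_window": [0.8, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]  # e.g., an embedded question about getting money back
results = top_k(query, index, k=2)
print(results)
```

The two refund and return documents score far above the shipping document, which is the behavior RAG and recommendation systems build on.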

Data-centric AI Tooling

Instead of focusing only on model tuning, organizations are improving data quality and structure with tools such as automated labeling, synthetic data generation, feature stores, and data versioning platforms.
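One idea behind data versioning can be illustrated with content addressing: hash a canonical serialization of a dataset so that any change to the data yields a new version identifier. This is a simplified assumption for illustration, not how any particular versioning platform works internally. The function name `dataset_version` is hypothetical.

```python
import hashlib
import json


def dataset_version(records):
    """Return a short content hash that changes whenever any record changes."""
    # Canonical JSON (sorted keys, fixed separators) so identical data always hashes identically.
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


v1 = dataset_version([{"id": 1, "label": "cat"}, {"id": 2, "label": "dog"}])
v2 = dataset_version([{"id": 1, "label": "cat"}, {"id": 2, "label": "fox"}])
print(v1, v2)  # relabeling a single record produces a different version id
```

Because the hash depends only on content, a training run can record the version it used and later be reproduced against exactly the same data.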

Streaming Lakehouses and Incremental Compute

Modern platforms unify batch and streaming processing in a single architecture, allowing for real-time ingestion, continuous transformations, and incremental computation (processing only new or changed data). This supports live dashboards, fraud detection, and operational AI without maintaining separate systems.
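Incremental computation can be sketched with a watermark: the job remembers the highest record it has already processed and, on each run, touches only records past that point. This minimal example assumes an append-only source with monotonically increasing `id` values. Handling updated or deleted rows (the "changed data" case) would require something like change data capture, which this sketch does not implement. All names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class IncrementalSum:
    """Running totals per key, updated only with rows past the watermark."""

    watermark: int = 0  # highest row id already processed
    totals: dict = field(default_factory=dict)

    def process(self, rows):
        """Apply only unseen rows; return how many were processed in this run."""
        new_rows = [r for r in rows if r["id"] > self.watermark]
        for r in new_rows:
            self.totals[r["key"]] = self.totals.get(r["key"], 0) + r["amount"]
        if new_rows:
            self.watermark = max(r["id"] for r in new_rows)
        return len(new_rows)


source = [
    {"id": 1, "key": "store_a", "amount": 10},
    {"id": 2, "key": "store_b", "amount": 5},
]
job = IncrementalSum()
first_run = job.process(source)  # processes both rows
source.append({"id": 3, "key": "store_a", "amount": 7})
second_run = job.process(source)  # processes only the newly arrived row
print(first_run, second_run, job.totals)
```

The second run does work proportional to the new data rather than the whole table, which is what makes continuously updated dashboards affordable.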

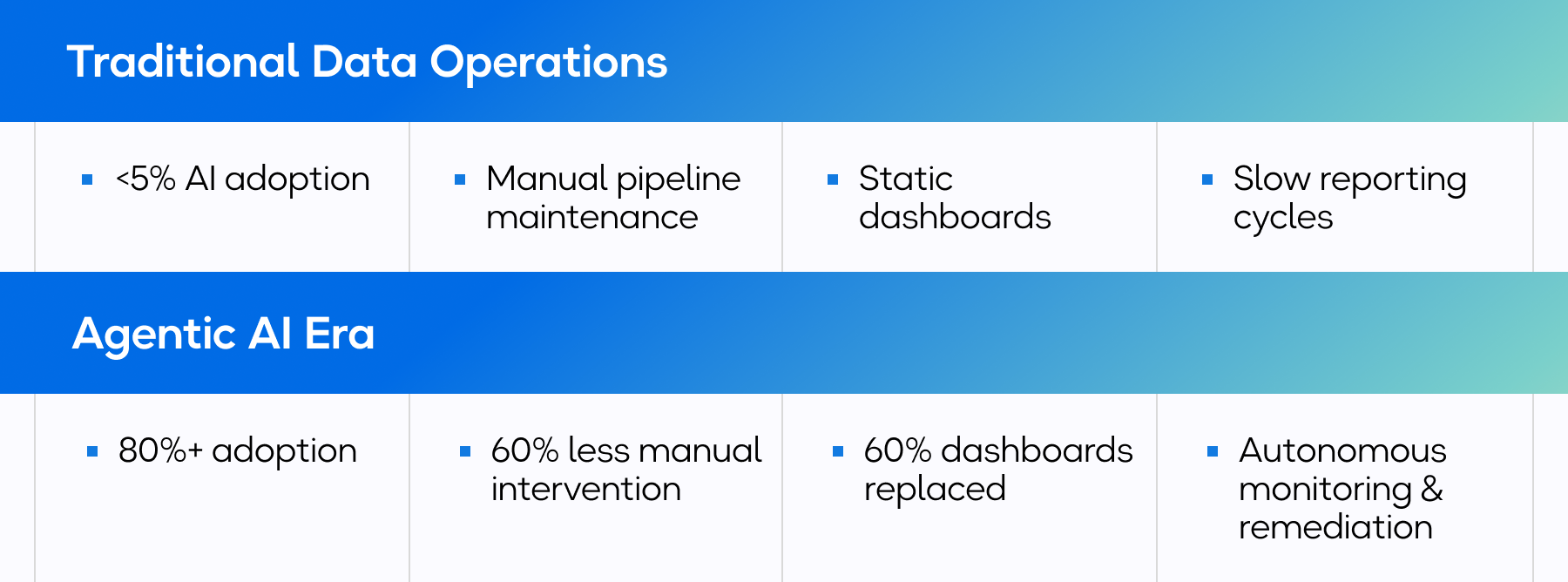

Agentic Data Engineering Systems

Advanced AI agents are starting to contribute to data engineering: they can generate SQL and transformation logic, detect broken pipelines, suggest optimizations, and automatically remediate issues. The result is less manual intervention and shorter development cycles.

Privacy-enhancing Technologies (PETs)

PETs allow organizations to analyze and share data without exposing sensitive information. Technologies like federated learning, differential privacy, secure multi-party computation, and confidential computing are particularly critical in regulated industries for privacy-first analytics.
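Differential privacy is the most compact of these techniques to illustrate. The sketch below applies the Laplace mechanism to a count query. Because adding or removing one person changes a count by at most 1, adding Laplace noise with scale 1/ε gives ε-differential privacy. The dataset and the `dp_count` helper are hypothetical. This naive floating-point version is for illustration only; real deployments should use a vetted differential privacy library.

```python
import random


def dp_count(values, predicate, epsilon, rng=random):
    """Return a noisy count satisfying epsilon-differential privacy (Laplace mechanism)."""
    true_count = sum(1 for v in values if predicate(v))
    # Sensitivity of a count is 1, so Laplace noise with scale 1/epsilon suffices.
    # The difference of two exponential draws with rate epsilon is Laplace(0, 1/epsilon).
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


ages = [23, 67, 45, 71, 34, 80, 52, 66]  # hypothetical patient ages
rng = random.Random(7)  # seeded only so the example is reproducible
print(dp_count(ages, lambda a: a >= 65, epsilon=1.0, rng=rng))
```

Smaller ε means more noise and stronger privacy; an analyst still gets a useful aggregate, but no single record can be confidently inferred from the output.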

Knowledge Graphs and Semantic Layers

Knowledge graphs connect data entities through relationships (e.g., customer → product → transaction), while semantic layers standardize business definitions across systems (e.g., what counts as “active user” or “revenue”). Together, they give analytics and AI systems a shared, consistent interpretation of business logic.
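The two ideas can be shown side by side in a small sketch. A knowledge graph stored as (subject, relation, object) triples is traversed along customer → transaction → product edges. A single shared function then defines "active user" for every consumer. The entity names and the 30-day activity window are assumptions chosen for illustration.

```python
from datetime import date, timedelta

# Knowledge graph as (subject, relation, object) triples; entities are hypothetical.
TRIPLES = [
    ("customer:ana", "made", "txn:1001"),
    ("txn:1001", "contains", "product:laptop"),
    ("customer:ben", "made", "txn:1002"),
    ("txn:1002", "contains", "product:mouse"),
]


def products_bought_by(customer):
    """Follow customer -> transaction -> product relationships."""
    txns = {o for s, r, o in TRIPLES if s == customer and r == "made"}
    return {o for s, r, o in TRIPLES if s in txns and r == "contains"}


# Semantic layer: one definition of "active user" reused by every report and model.
# Assumption: a user is active if seen within the last 30 days.
ACTIVE_WINDOW_DAYS = 30


def is_active_user(last_seen, today):
    return today - last_seen <= timedelta(days=ACTIVE_WINDOW_DAYS)


print(products_bought_by("customer:ana"))
print(is_active_user(date(2024, 5, 20), date(2024, 6, 1)))
```

If the business changes the definition to 14 days, only `ACTIVE_WINDOW_DAYS` changes, and every dashboard and model picks up the same new meaning.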

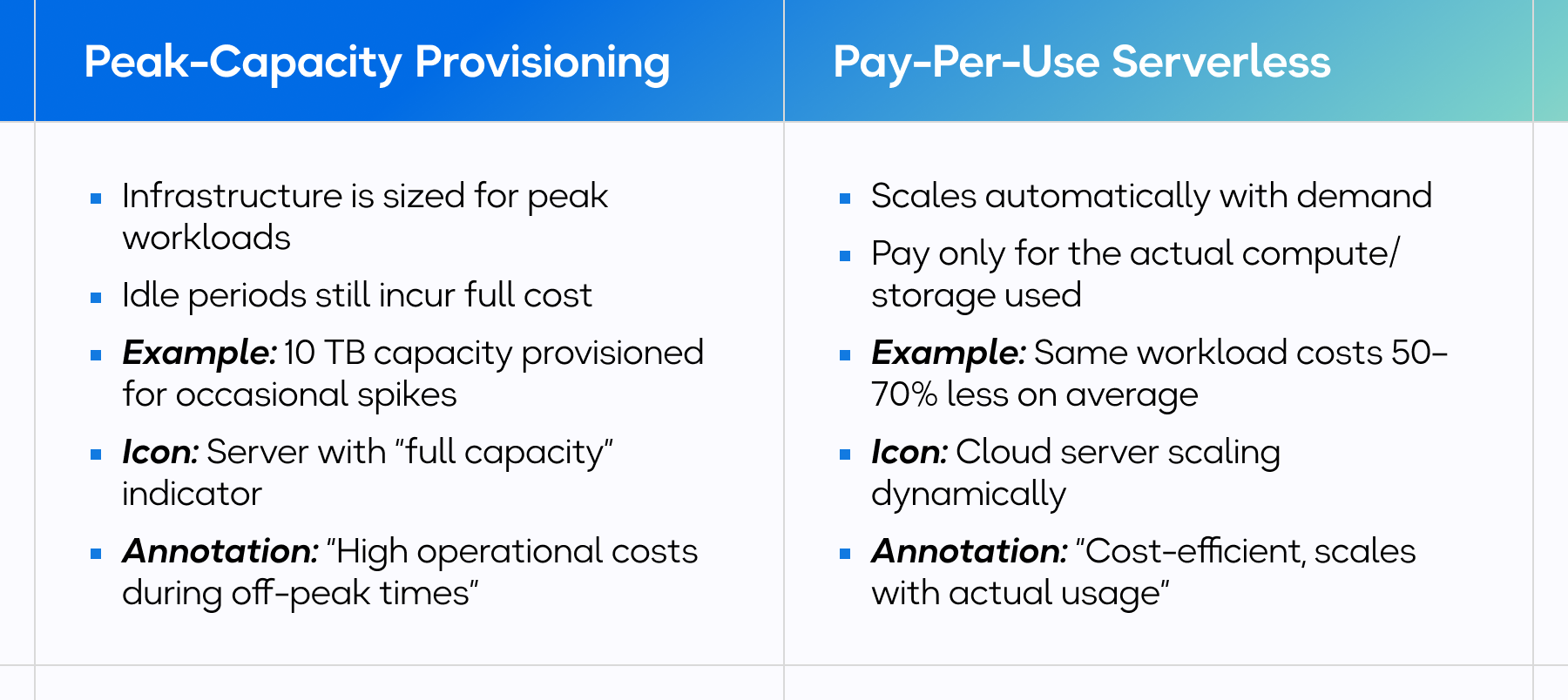

Serverless and Disaggregated Compute Architectures

Fine-grained, pay-per-query compute engines separate storage, processing, and orchestration for cost efficiency and scalability, especially for unpredictable workloads.

Metadata Control Planes and Data Contracts

Metadata is becoming the control center of modern data platforms. Centralized metadata systems provide unified visibility into schemas, lineage, ownership, data quality, and dependencies across distributed environments. Data contracts complement this foundation by formalizing expectations between data producers and consumers. By defining structure, quality standards, and change management rules upfront, they prevent breaking changes, reduce downstream disruptions, and improve overall system reliability.
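A data contract can be as simple as a list of expected fields, types, and nullability rules that every record is checked against before it reaches consumers. The sketch below assumes a hypothetical "orders" feed. Real contracts are often written declaratively (for example, as JSON Schema or YAML) and enforced in CI or at ingestion, but the checking logic is similar in spirit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    type_: type
    nullable: bool = False


# Hypothetical contract agreed between the producer and consumers of an "orders" feed.
ORDERS_CONTRACT = [
    FieldSpec("order_id", str),
    FieldSpec("amount", float),
    FieldSpec("coupon_code", str, nullable=True),
]


def validate(record, contract):
    """Return a list of contract violations for one record (empty list means valid)."""
    errors = []
    for spec in contract:
        if spec.name not in record:
            errors.append(f"missing field: {spec.name}")
            continue
        value = record[spec.name]
        if value is None:
            if not spec.nullable:
                errors.append(f"null not allowed: {spec.name}")
        elif not isinstance(value, spec.type_):
            errors.append(f"wrong type for {spec.name}: {type(value).__name__}")
    return errors


good = {"order_id": "A1", "amount": 19.99, "coupon_code": None}
bad = {"order_id": "A2", "amount": "19.99"}  # amount became a string, coupon_code dropped
print(validate(good, ORDERS_CONTRACT))
print(validate(bad, ORDERS_CONTRACT))
```

Running such a check on the producer side catches a breaking change (here, `amount` silently turning into a string) before it disrupts downstream dashboards or models.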

Edge Data Processing and Edge AI Pipelines

Instead of sending all data to the cloud, processing happens closer to devices (IoT sensors, factories, vehicles). This lowers latency, reduces bandwidth costs, and enables real-time decision-making, all of which are critical for industrial AI and smart infrastructure.
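A common edge pattern is to reduce a window of raw readings to a compact summary on the device and to make threshold decisions locally, so that only the summary travels to the cloud. The function name, sample readings, and threshold below are hypothetical.

```python
from statistics import mean


def summarize_window(readings, threshold):
    """Reduce a window of raw sensor readings to one compact record.

    Only this summary (including the locally computed alert flag) would be
    sent upstream instead of every raw reading.
    """
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
        "alert": max(readings) > threshold,  # decided on-device, no cloud round trip
    }


readings = [70.1, 70.4, 71.0, 85.2, 70.8]  # e.g., motor temperature samples in °C
summary = summarize_window(readings, threshold=80.0)
print(summary)
```

Five readings become one record here; at real sensor sampling rates, the same idea can cut upstream traffic dramatically while the alert still fires immediately.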

Composable and API-First Data Platforms

Composable platforms use modular components connected through APIs, allowing for vendor flexibility, multi-cloud strategies, easier system replacement, and faster innovation. As a result, organizations can avoid vendor lock-in and adapt more quickly to new technologies.
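The composability idea can be sketched in code: pipeline steps depend on a small interface rather than a specific vendor, so backends can be swapped without touching the steps. The example uses Python's `typing.Protocol` for structural typing. The `ObjectStore` interface and both backends are hypothetical; an adapter for a real cloud storage service would expose the same two methods.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class ObjectStore(Protocol):
    """The only storage contract the pipeline depends on."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """One backend: keeps blobs in a dict."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def get(self, key: str) -> bytes:
        return self._blobs[key]


class LocalDirStore:
    """A second, interchangeable backend: writes blobs as files in a directory."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def put(self, key: str, data: bytes) -> None:
        (self.root / key).write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


def archive_report(store: ObjectStore, name: str, body: str) -> str:
    """Pipeline step written against the interface, not a specific vendor."""
    store.put(name, body.encode("utf-8"))
    return store.get(name).decode("utf-8")


with tempfile.TemporaryDirectory() as tmp:
    results = [
        archive_report(backend, "report.txt", "daily revenue: 1200")
        for backend in (InMemoryStore(), LocalDirStore(tmp))
    ]
print(results)
```

Replacing a vendor then means writing one new adapter class, while `archive_report` and every other step stay unchanged.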

Industry Use Cases of Data Engineering Trends

The role of data is growing rapidly across industries. From medical facilities to entertainment companies, organizations are adopting emerging data engineering trends to address their specific operational challenges and market requirements.

Below, take a look at some practical examples of how data engineering is used.

Healthcare

Streaming pipelines ingest data from wearables, hospital monitors, and EHR systems. Lakehouse architectures unify structured clinical records with unstructured notes, imaging, and genomics.

Use Cases

- Continuous patient vital monitoring

- Early risk detection and predictive deterioration models

- Precision medicine and research analytics

Business Impact

- Reduced emergency interventions

- Lower care costs

- Improved patient outcomes

- Faster clinical research insights

Retail and E-commerce

Streaming analytics track browsing, purchase behavior, mobile app interactions, and in-store activity to build unified customer profiles.

Use Cases

- Real-time product recommendations

- Dynamic pricing optimization

- Customer lifetime value modeling

- Demand forecasting

Business Impact

- Higher conversion rates

- Improved inventory planning

- Increased customer retention

Finance

AI models analyze transactions in milliseconds, supported by metadata-driven audit trails and automated reporting pipelines.

Use Cases

- Real-time fraud detection

- Risk modeling

- Automated compliance reporting

- Anti-money laundering (AML) monitoring

Business Impact

- Reduced fraud losses

- Fewer false positives

- Faster regulatory reporting

- Stronger compliance posture
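Real-time fraud detection depends on scoring each transaction as it arrives, without rescanning the account's full history. As a deliberately simple stand-in for the AI models described above, the sketch below keeps a per-account running mean and standard deviation with Welford's online algorithm and flags amounts far from the norm. The thresholds and sample history are assumptions; production systems combine many more signals and learned models.

```python
import math


class RunningStats:
    """Welford's online algorithm: update mean and variance one value at a time."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    def std(self):
        """Sample standard deviation (0.0 until at least two values are seen)."""
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0


def is_suspicious(stats, amount, z_threshold=3.0, min_history=5):
    """Flag an amount far from this account's history (simple rule, not an ML model)."""
    if stats.n < min_history or stats.std() == 0:
        return False
    return abs(amount - stats.mean) / stats.std() > z_threshold


stats = RunningStats()
for amount in [42.0, 38.5, 45.0, 40.0, 41.5, 39.0]:  # hypothetical card history
    stats.update(amount)

print(is_suspicious(stats, 43.0), is_suspicious(stats, 950.0))
```

Each update is constant-time, which is why this style of streaming statistic fits the millisecond budgets of payment authorization.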

Logistics and Supply Chain

IoT sensors, GPS, and weather data feed edge and cloud analytics systems. Predictive models adjust routes and forecast demand dynamically.

Use Cases

- Route optimization

- Real-time shipment tracking

- Predictive fleet maintenance

- Inventory optimization

Business Impact

- Lower fuel costs

- Higher on-time delivery rates

- Reduced downtime

- Optimized working capital

Media and Entertainment

Real-time data warehouses continuously process viewer interactions. AI models trigger retention campaigns when churn risk increases.

Use Cases

- Real-time content recommendations

- Churn prediction

- Streaming optimization

- Audience analytics for content strategy

Business Impact

- Improved subscriber retention

- Higher engagement

- Faster content iteration cycles

What the Future of Data Engineering Could Look Like

Given current trends in data engineering, its future is expected to center on autonomous, AI-driven systems that require minimal human intervention for routine operations, while accelerating innovation and enabling business responsiveness. Organizations will shift from managing infrastructure to designing intelligent data products that automatically serve business needs.

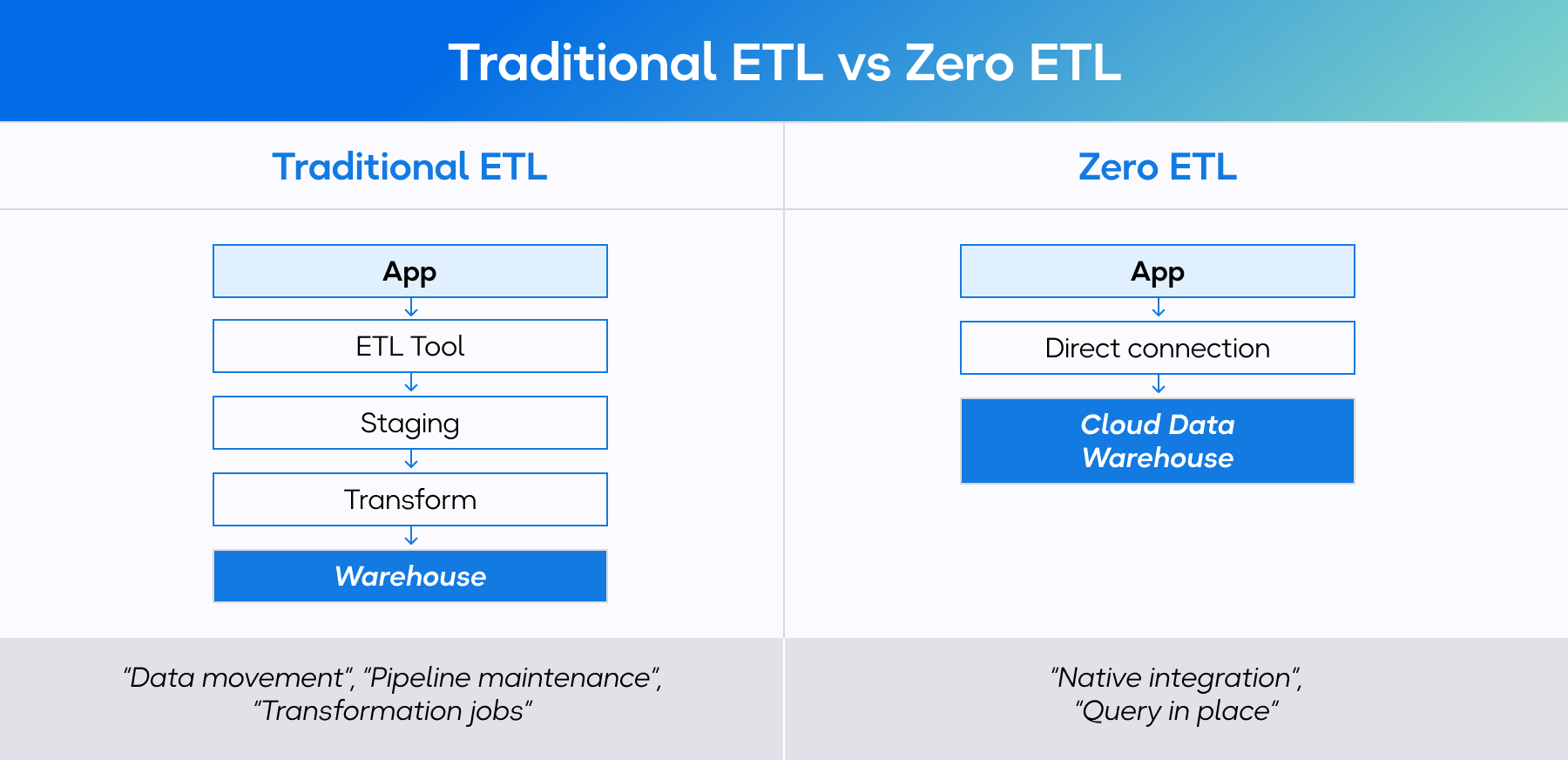

As data volumes continue to surge, AI-powered ELT processes, direct integrations, and scalable lakehouse platforms will become foundational. Generative AI, though still evolving, is already streamlining development cycles, improving accessibility, and embedding intelligence across the entire data lifecycle.

Alongside these transformations, the role of the data engineer will evolve too. It already ranks among the fastest-growing roles, with U.S. salaries reaching over $220,000. Data engineers will increasingly be expected to collaborate with data scientists and AI teams to support advanced analytics and machine learning initiatives.

Future architectures will be hybrid and composable, blending on-premises and cloud environments for flexibility and regulatory alignment. Sustainability will also become a priority, driving the design of more energy-efficient data systems.

Ultimately, the future of data engineering is less about pipelines and servers – and more about intelligent, automated, and scalable data ecosystems that fuel continuous innovation.

Conclusion

Given the state of the industry today, we can say with confidence that the future of data engineering offers many promising opportunities, shaped by autonomous AI integration, real-time processing, and self-service analytics. Adopting these advanced technologies and modern practices can give businesses a strong competitive advantage. However, the success of such initiatives depends on a well-defined strategy and expert execution.

With the support of a team that has deep expertise in designing, configuring, and integrating modern data engineering solutions, you can turn raw data into a strategic asset.

At inVerita, we work closely with clients to ensure every solution is guided by a deep understanding of their unique business needs and challenges. We help companies embrace emerging data engineering trends today, so they can position themselves to lead in the data-driven future.